The Art of not being Hype.

It always feels good to follow the rhythm of the tweetosphere, to use the latest tool or framework (still in alpha version), to be hype, to talk about it, to blog about it: you need to use this revolutionary bleeding-edge thingy.

Your ops team will love you. (taken from experience)

Beyond that, in the real world, when you have a serious business, systems running in production, when you can’t have any downtime, when you need to monitor everything and be responsive: you can’t play with your technology stack, you can’t be hype. You could burn yourself and the whole company at the same time. Then go bankrupt the next day (OK, maybe not).

Over time, I’ve accumulated tons of questions to ask myself and my team when we want to install a new tool/software/framework. Just because Airbnb uses it doesn’t mean we should, right? We don’t have the same needs. Let’s get the cargo cult out of the way.

Some questions won’t be relevant, depending on what this “new thing” is: something front-end oriented does not involve the same concepts as something back-end oriented. Some pieces are common, but not all of them. You get the idea.

Maieutics, here we come!

You’ve found a “new” cool thing you want to use. Let’s think about it first.

What exactly is the need?

- The first question is the most important one: do you know exactly what the need is? What are the use-cases? Are they precisely defined?

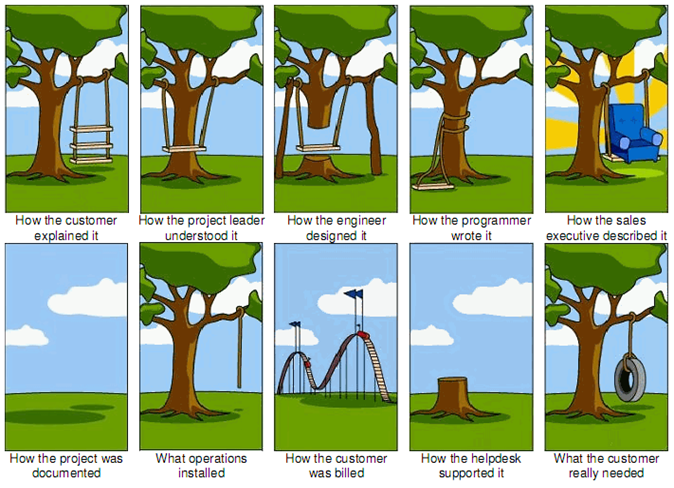

This step is often underestimated. We know roughly what we (the boss/the customer) want, but without all the details, and we run blindly into the wall thinking we’ll be able to twist the need to fit the new tool if necessary. Think twice about it. If you don’t have all the details, refuse to work and go get them.

- Does the tool answer the need exactly? Is it like using a tank to kill a fly? Is it the proper tool for the need?

“I want a blog! Let’s do some reactive functional programming, use some monads, get the UI to 90fps, put the content into HDFS, and query it through Impala.” No? Really do estimate what the capabilities of the tool are, and whether it was created for your use-case or for something different or larger. Using a tool because it does “more” is not necessarily an advantage. A tool has a history, a raison d’être.

The smaller the API surface, the easier it is to think about.

- Is this tool hype right now? Do you feel it’s really something strong that you need, or are you just getting sucked in?

Hype is the enemy of reflection. Try to think outside the box to see if you really need it. Escape the hype. Take a step back.

- Are you sure you don’t already have something similar in your stack?

Often, we don’t realize we already work with something similar because the use-case is different (but our tool can already handle it), or because we didn’t know all its features.

Know what your existing technology stack is capable of.

- Is it production-ready?

Yes, GitHub projects are cool. Yes, tons of new apps are created every day and have nice websites. No, they are often not production-ready for you.

They generally exist because a company or a person had some use-cases and built a tool for them. Do they match yours? Is it stable? Is it bug-free (or are the bugs at least identified)? What about the documentation? Can you monitor it? Does it have a proper logging strategy? Does it expose metrics? Is it configurable? This is the definition of production-ready. Most of this post relates to this question.

- What’s the cost?

Free is always better. But free is not always the best.

- Did you check the licence or the patents?

You wouldn’t want a surprise on this side. “The license granted hereunder will terminate, automatically and without notice, if you […lots of stuff…]”

Is it clear how to deal with it on a daily basis?

- How to install?

Is it clear to you? Is it just next, next, finish or tar zxvf then run? Do you need to install some frameworks, some new language platform? Do you know exactly what impact the installation has on the system?

- Can we use Docker to make it run?

Docker makes installation easier because all dependencies are contained. The impact surface is limited, so it’s easier to reason about.

- Should it run on a standalone server? What about network requirements?

Some tools need their own server to run full steam ahead or to avoid cannibalizing other processes’ resources. Others consume a lot of network IO. That must be taken into account: some systems/clusters could be heavily stressed because of one component.

- How to work with it in dev mode? How to work with it in staging mode? What about the DX?

Don’t forget that developers want a good experience when working with tools. If there is no interface, or worse, an ugly and unresponsive interface, they can “reject” it.

A developer is like life: it always finds a way.

- Can it be used offline?

Let’s say I take the plane, does it still work on my laptop? I used to take the train a lot, without any connection. Please make it work.

- Does it need to be in-sync with something?

Some tools need to be synced with external storage. That can raise a lot of questions and issues (network partitions, server crashes).

- Does it need to use a third-party service or some other dependencies to work?

Often, a tool or framework is connected to something else to add some value to it, or to have some storage, to be interconnected. If that’s the case, all these questions need to be asked of those dependencies too.

- Does it need and have an admin console? Or do we need other software to administer it?

It’s cool to install something, but can you get insights when it’s working? Such as some live debugging tool, some integrated monitoring admin, something? You never want to use something blindly. You must not.

- Does it work on its own in the long run, without intervention/maintenance?

Can the whole company take one month of holidays and the tool will still run properly? No intervention needed?

Should it evolve over time?

- Is the tool or framework extensible? Does it have plugins? Does it have a nice ecosystem?

As we said, maybe you already have something that can get the job done. But maybe it needs a plugin to make it so. And does your new toy have this ability? When it comes to picking one of two tools, that could help.

- Is there any tool that can be used on top of it?

Frameworks can have complex APIs, and often we can find “higher-order frameworks” that offer a different, more specific API while using the complex one under the hood. Often in backend systems, you have a tool that does its job of ingesting data, and you want a visualization tool on top. A stack of tools.

- Will we be able to reuse it in the future?

It’s nice to answer some need at a given point in the present. But it’s cooler to reuse it later to answer more business needs.

- Is it maintained? Does it have a strong community?

How many stars on GitHub? :troll: Issues/PRs? Google Group? Last message, last fix? Does it have an IRC/Slack? Are people asking about it on Stack Overflow? Is this a one-person project, a one-company project? Do you know? Quickly check on Google to feel its vibrations.

- Is the code clear?

If it’s open source, quickly check the code. I personally like to enforce a nice code style and organization. I feel confident when the code is well organized and I can read it, even if it’s all buggy inside. When it works but lacks style and/or organization, I can barely read it, I don’t know exactly how it works, and I’m not that confident. I don’t trust it. What if you need to debug it?

- If needed, could we make our way into the code and do a Pull Request?

It happens. Sometimes, you discover a problem, or you want to add an option. It’s always preferable to know the language and understand the source code. Therefore, open-source helps tremendously.

- Are we going to be stuck with this tool forever? What if we need to change later, are we going to be in trouble?

Often, we realize the tool or framework is not that great, or the need has changed, but we’ve already spent time on it. Still, it’s better to switch to something else late than never. Will it be complex to remove? Is it going to take root on all the servers?

- Is the team going to understand it? What if I get hit by a bus tomorrow? Are they going to be okay with the new tool?

The famous bus test. Is the tool something only you want, care about, and understand, or is the team behind it too?

Does it impact business?

- Is it real-time or batch based? Is it what the users expect?

When it comes to back-end and especially data processing, this big question arises because it can directly impact the end-user. The tool can answer your need of “processing the data”, but at what cost? If the user needs to wait hours to get it, maybe that’s unacceptable. Know your business.

- Is this going to impact the user experience?

It’s especially true for front-end frameworks: if you add a framework that weighs 3MB of JavaScript, slows down the whole website, and degrades the user experience, you are going to have a hard time. If you add a feature to expose some dashboards, but they are slow as hell, better not to do it.

Build a performance budget that must be validated for each build. Run automated performance tests.
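A budget check can be as simple as a script that fails the build when an asset is over its limit. Here is a minimal sketch; the asset names, the limits, and the `check_budget` helper are all hypothetical, and a real build would measure the sizes from its own output.

```python
# Hypothetical budget: maximum size in kilobytes for each asset.
BUDGET_KB = {"app.js": 300, "vendor.js": 500, "styles.css": 100}


def check_budget(sizes_kb, budget_kb=BUDGET_KB):
    """Return (asset, size, limit) for every asset that is over budget."""
    violations = []
    for asset, limit in budget_kb.items():
        size = sizes_kb.get(asset, 0)  # a missing asset counts as 0 KB
        if size > limit:
            violations.append((asset, size, limit))
    return violations


if __name__ == "__main__":
    # Made-up measurements; vendor.js is the 3MB framework from above.
    measured = {"app.js": 280, "vendor.js": 3072, "styles.css": 40}
    for asset, size, limit in check_budget(measured):
        print(f"{asset}: {size} KB exceeds the budget of {limit} KB")
```

Wire something like this into the build so that a non-empty list of violations makes it fail.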

- Does it hold up under load?

Back-end systems must be built to handle some load. If you use a new HTTP framework that can’t handle 100 concurrent users per second, you won’t make it. Automate load tests. Not every company needs to handle this throughput, but you never know, maybe you’re the next Airbnb.

- Are decision-makers going to be able to use it to make decisions?

Does it help stakeholders make decisions? Can it impact business? How are you going to measure its performance and its overall business impact?

How to know what’s going on with it?

- How to monitor the input, output, insides?

It’s nice to have a tool that runs smoothly. But it’s nicer to have some intelligence about its metrics (cpu/memory/IO, and internals), to know how the tool performs and what its impact is on the system and on the other tools. Are you at its limit? Can it handle more?

You must always measure the internals, the ins and the outs of any system you use.

- Does it generate proper logs you can push inside a log analysis system?

Any backend piece generates some logs. Can you feed them into a log management system such as Logstash, to be visualized in a dashboard à la Kibana? That’s a very important feature. A chart can instantly reveal abnormalities.
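To give an idea of what “proper logs” can look like, here is a small sketch using Python’s standard `logging` module that emits one JSON object per line, a shape most log shippers can parse without custom patterns. The field names and the `my-tool` logger name are my own choices for illustration, not a format any particular tool requires.

```python
import json
import logging


class JsonFormatter(logging.Formatter):
    """Format each log record as a single-line JSON object."""

    def format(self, record):
        return json.dumps({
            "time": self.formatTime(record),
            "level": record.levelname,
            "logger": record.name,
            "message": record.getMessage(),
        })


def build_logger(name="my-tool"):
    """Create a logger that writes JSON lines to stderr."""
    logger = logging.getLogger(name)
    handler = logging.StreamHandler()
    handler.setFormatter(JsonFormatter())
    logger.addHandler(handler)
    logger.setLevel(logging.INFO)
    return logger


if __name__ == "__main__":
    build_logger().info("processed %d events", 42)
```

If the tool you are evaluating can only produce free-form text, plan for the time you will spend writing and maintaining parsing rules.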

- Check how memory evolves over a long period of time, to see if there is any memory leak (it happens).

Before releasing something into production, make sure it does not contain any memory leaks. They slip through when you don’t test enough. Run some benchmarks, stress-test it, and monitor its metrics. That must be part of the delivery process.
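One simple way to spot a leak in those metrics is to fit a straight line through the memory samples and look at the slope. A rough sketch, assuming you already collect resident memory in MB at regular intervals; the `looks_like_leak` name and the 0.5 MB-per-sample threshold are arbitrary choices for this example.

```python
def slope_per_sample(samples):
    """Least-squares slope of the samples plotted against their index."""
    n = len(samples)
    if n < 2:
        raise ValueError("need at least two samples")
    mean_x = (n - 1) / 2
    mean_y = sum(samples) / n
    num = sum((i - mean_x) * (y - mean_y) for i, y in enumerate(samples))
    den = sum((i - mean_x) ** 2 for i in range(n))
    return num / den


def looks_like_leak(rss_mb, max_growth_mb_per_sample=0.5):
    """Flag a series whose memory grows faster than the allowed slope."""
    return slope_per_sample(rss_mb) > max_growth_mb_per_sample


if __name__ == "__main__":
    steady = [200, 201, 199, 200, 201, 200]
    leaking = [200, 205, 211, 214, 221, 226]
    print(looks_like_leak(steady), looks_like_leak(leaking))
```

A slope is only a hint: caches warming up also grow for a while, so run the test long enough for memory to plateau.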

- If your monitoring fails, what is going to happen?

Let’s say the monitoring/logging tool fails for some reason but the tool itself is still running. Is it going to impact the business? (let’s say some people were relying on it)

- Does it need a lot of disk space?

Generally, you will know. But it really has to be thought through ahead of time and estimated. You wouldn’t want to stop the system just to upgrade drives. Plan for years. Ask yourself: how much disk space will it eat up each month?
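The arithmetic is trivial, but writing it down forces you to put a number on the growth. A back-of-the-envelope sketch, assuming constant monthly growth (real growth rarely is); the function name and the figures are made up.

```python
def months_until_full(capacity_gb, used_gb, growth_gb_per_month):
    """Whole months left before the disk is full, at constant growth."""
    if growth_gb_per_month <= 0:
        raise ValueError("growth must be positive")
    free_gb = capacity_gb - used_gb
    return max(0, int(free_gb // growth_gb_per_month))


if __name__ == "__main__":
    # 2 TB disk, 500 GB already used, 40 GB of new data every month.
    print(months_until_full(2000, 500, 40))  # prints 37
```

37 months sounds comfortable, until the business doubles the ingestion rate.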

- Is someone going to be in charge of maintaining it?

It’s running, everything is fine. Do you need an expert to maintain it? Or is the monitoring enough, so you can wait for Prometheus alerts to warn you?

Should it scale to infinity and beyond?

- Do you need it to scale?

We often talk about scaling, but do you need it? Can you plan how the quantity of data used by the tool is going to evolve? Are you going to keep all the data, or can you just wipe anything older than one month? What about CPU and memory: do you feel the tool is going to ask for more and become resource-bound?

If you don’t need it to scale, skip the following.

- Does it scale horizontally and/or vertically?

Scaling out is different from scaling up, and can be cheaper. Nowadays, we prefer to scale out by simply adding more servers instead of buying one big powerful machine. That’s especially useful to fail over gracefully. If you have one big-ass machine and it dies, you’re done.

- Do you need it to be elastic?

If you have a seasonal business, then you probably want your servers to be elastic, i.e. to scale according to the load. No load, no scale, 1 server. Big load, big scale, 10 servers. All that, automatically, à la Amazon EC2. Can the tool handle hot-adding/removing instances?

- Does it have dependencies to be able to scale?

Some tools embed their own clustering solution to sync peers, such as MongoDB (config servers). Most tools rely on an external system to do it, such as Zookeeper. That dependency also needs to handle failover situations, otherwise it becomes a SPoF.

- Does it scale linearly and proportionally? (adding one server after another should add almost the same throughput as the first server)

The best scale-out is when adding a new node improves the total throughput of the cluster linearly (meaning there is no loss due to extra clustering/syncing operations), not sub-linearly. E.g.: you have one node, the throughput is 100. With 2 nodes the throughput is 150, and with 4 nodes it is 200: that’s not that good (Amdahl’s law). The best would be 4 nodes = 400.
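To put numbers on that example, here is a small sketch that computes the scaling efficiency (measured throughput divided by the ideal linear one) and the speedup predicted by Amdahl’s law. The helper names are mine, and the parallel fraction of 2/3 is simply the value that happens to reproduce the numbers above, not a measurement of any real system.

```python
def scaling_efficiency(throughput_by_nodes):
    """Map node count -> measured throughput / ideal linear throughput."""
    base = throughput_by_nodes[1]
    return {n: t / (n * base) for n, t in throughput_by_nodes.items()}


def amdahl_speedup(nodes, parallel_fraction):
    """Speedup predicted by Amdahl's law for a given parallel fraction."""
    return 1 / ((1 - parallel_fraction) + parallel_fraction / nodes)


# The example from the text: 1 node = 100, 2 nodes = 150, 4 nodes = 200.
measured = {1: 100, 2: 150, 4: 200}
print(scaling_efficiency(measured))  # {1: 1.0, 2: 0.75, 4: 0.5}

# With 2/3 of the work parallelizable, the model gives roughly 1.5x on
# 2 nodes and 2x on 4 nodes, matching the measurements.
print(amdahl_speedup(2, 2 / 3), amdahl_speedup(4, 2 / 3))
```

Under that model the speedup can never exceed 1 / (1 - 2/3) = 3, so the cluster tops out below a throughput of 300 no matter how many nodes you add. That is worth knowing before you buy the servers.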

Does it fit your performance budget?

- Can you check for performance bottlenecks? Add sampling on the fly?

- Do we have enough performance for production?

- What about the future, in one year, could you evolve it easily?

- Can you adapt the performance on the fly or do you need to restart?

- Can you test it? Can you benchmark it? Is it stable across tests? Can you save metrics to compare later?

- Does it have to work all the time?

- Can you preprocess or precompute some parts before it handles it?

- Is your average response time going to be impacted?

- Do you need pro SSDs, or are classic HDDs fine?

When it fails, is everything gonna be alright?

- For some reason, the process crashes or fails to do its job properly. Do you know what to do?

- Can it fail over easily?

- If it has failed, can it recover quickly? Automatically? Is a human decision needed?

- Are you going to lose data during a failover? Or is it going to be buffered somehow or taken into account later? (as a continuous stream)

- Are you sure you won’t have any duplicated data after a failover?

- Can you live for a while if the tool is not working?

- Can you restart from a failed state? Do you have to do some (manual) cleaning? (e.g. in dependencies such as Zookeeper, physical folders, HDFS…)

Backup

- Does it have its own backup strategy?

- Can you back up its data regularly somewhere else?

- Can it be replicated?

- Do you care about history?

Upgrade

- If it restarts, will it work, or are you going to be in trouble? (missing data, can’t do proper reports? can’t bill customers?)

- Can you do a rolling restart to always be up? Without losing data?

—

If you answer all those questions :

Thanks for reading!

This was a draft I’d had for years. It would have been a shame not to publish it in its current form, full of gifs. I just cleaned it up, smoothed it out, and published it for you. ❤